
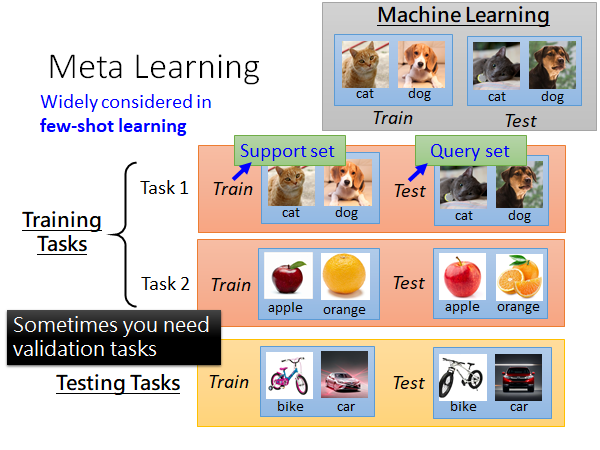

To counter such an issue, the field of meta-learning has shown great potential in fine tuning and generalizing to new tasks using mini dataset. Klaus Obermayer provided feedback on the project.Conventional training mechanisms often encounter limited classification performance due to the need of large training samples. He also wrote parts of the manuscript and helped with finalizing the document. Thomas Goerttler came up with the idea and sketched out the project. Both wrote the introduction together and contributed most of the text of the other parts. Max Ploner created the visualization of iMAML and the svelte elements and components. Luis Müller implemented the visualization of MAML, FOMAML, Reptile and the Comparision. "model-agnostic" or go straight to an explanation of MAML. Having set the scene, we can now dig into MAML and its Lot of classes but only a few instances for each. With MNIST containing only a few classes (the digits 0 to 9) and many instances and Omniglot containing a Because of that, the original authorsĭescribed it as a "transpose" of the well-known MNIST dataset , It contains 1623 different characters across 50 alphabets, with each characterīeing represented by 20 instances, each drawn by a different person.

Omniglot contains 1623 different characters across 50 alphabets, with each characterīeing represented by 20 instances, each drawn by a different person. Prominent example of a few-shot learning task, whose symbols are The small exercise from the beginning, which we offer either as a \(20\)- or \(5\)-way-1-shot problem, is a If you are presented with \(N\) samples and are expected to learn a classificationĬlasses, we speak of an \( M \)-way-\(N\)-shot problem.

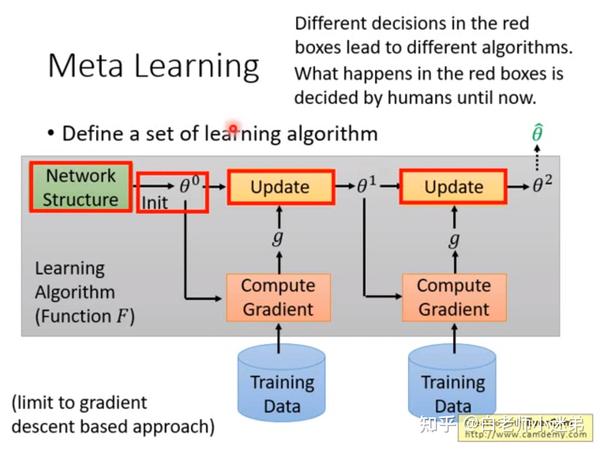

The learning of this meta-knowledge is called "meta-learning".Īchieving rapid convergence of machine learning models on a few samples is known as

Not obtained from a single task but the distribution of tasks. This similarity assumption allows the model to collect meta-knowledge The idea in its core is to derive an inductive biasįrom a set of problem classes to perform better on other, newly encountered, problem-classes. We can pretrain models on tasks that we assume to be similar to the target tasks. While clearly, one sample is not enough for a model without prior knowledge, While introducing these two fields to you, weĪlso equip you with the most important terms and concepts we will need along the rest of the article. In two fields that each address one of the above requirements. Model-agnostic meta-learning, a method commonly abbreviated as MAML, will be the central topic of this article.
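
As a concrete illustration of the \(M\)-way-\(N\)-shot terminology, the following minimal Python sketch samples one such task (often called an episode) from a toy dataset. The function name `sample_episode`, the dictionary layout, the `n_query` parameter, and the toy data are assumptions made for illustration; they are not taken from the article or from any Omniglot loader.

```python
import random


def sample_episode(dataset, m_way, n_shot, n_query=1, rng=None):
    """Sample one M-way-N-shot episode (hypothetical helper).

    dataset: dict mapping a class name to a list of its instances.
    Returns (support, query), each a list of (instance, episode_label) pairs.
    """
    rng = rng or random.Random()
    # Pick M distinct classes for this episode.
    classes = rng.sample(sorted(dataset), m_way)
    support, query = [], []
    for episode_label, cls in enumerate(classes):
        # Draw N support and n_query query instances without overlap.
        picked = rng.sample(dataset[cls], n_shot + n_query)
        support += [(x, episode_label) for x in picked[:n_shot]]
        query += [(x, episode_label) for x in picked[n_shot:]]
    return support, query


# Toy stand-in for Omniglot: 30 classes with 20 instances each (assumed data).
toy = {f"char_{c}": [f"char_{c}_img_{i}" for i in range(20)] for c in range(30)}

support, query = sample_episode(toy, m_way=5, n_shot=1, n_query=2,
                                rng=random.Random(0))
print(len(support), len(query))  # 5 10
```

In a 5-way-1-shot episode the support set holds exactly one labeled example per class, which is all a model sees before being evaluated on the query examples. Drawing many such episodes from different class subsets is one way to expose a model to a distribution of tasks rather than a single task.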
